For most businesses, store locations change infrequently, so uploading a revised spreadsheet every few months is not an issue. But if you're managing a large number of stores, or your information changes on a regular basis, it can be a little cumbersome. This is especially true if your store location data is held in an internal database of some description: exporting it as a CSV file only to import it into your Blipstar database is rather inefficient. If only there were a simple way to sync your store locator with your internal database...

Well, there is a way - it's not particularly elegant but it works!

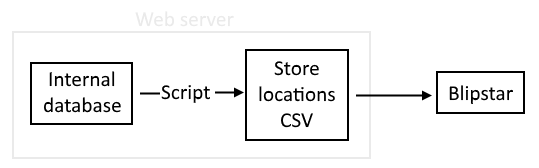

So what's involved? Basically (see screenshot)...

One nice thing about this approach is that it doesn't matter what database you use (MySQL, SQL Server, Oracle...) or your language of choice (Java, PHP, Perl, Python, .NET, C#...). As long as you can dynamically generate a CSV file at timed intervals (e.g. once a day), you're in business. CSV is one of the simplest file formats going, and most programming languages provide a way to query a database and output text to a file.
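To give a feel for how little code the export step needs, here is a minimal sketch in Python. It uses SQLite purely so the example is self-contained; the `stores` table, its column names and the `export_stores_to_csv` function are all hypothetical, so swap in your own database driver, query and whatever columns your store locator actually uses.

```python
import csv
import os
import sqlite3
import tempfile


def export_stores_to_csv(conn, csv_path):
    """Dump the (hypothetical) stores table to csv_path and return the row count."""
    # Table and column names are assumptions for illustration only.
    cursor = conn.execute(
        "SELECT name, address, city, postcode FROM stores ORDER BY name"
    )
    headers = [col[0] for col in cursor.description]
    rows = cursor.fetchall()

    # Write to a temporary file first, then swap it into place, so anything
    # fetching the CSV never sees a half-written file.
    tmp_path = csv_path + ".tmp"
    # newline="" lets the csv module control line endings itself.
    with open(tmp_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(headers)
        writer.writerows(rows)
    os.replace(tmp_path, csv_path)
    return len(rows)


if __name__ == "__main__":
    # Demo data in an in-memory database; a real job would connect to
    # your internal database instead.
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE stores (name TEXT, address TEXT, city TEXT, postcode TEXT)"
    )
    conn.execute(
        "INSERT INTO stores VALUES (?, ?, ?, ?)",
        ("Example Store", "1 Main Rd", "York", "YO1 1AA"),
    )
    out_path = os.path.join(tempfile.mkdtemp(), "stores.csv")
    count = export_stores_to_csv(conn, out_path)
    print(f"Wrote {count} store(s) to {out_path}")
```

In practice you would point `csv_path` at a location your web server publishes and run the script on a schedule (cron or Windows Task Scheduler, for example). The `csv` module takes care of quoting, so addresses containing commas come out intact.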

Tips:

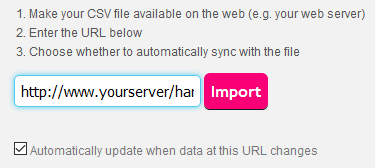

As an example we've put a test CSV file at the following URL: http://www.blipstar.com/_hard2guess-filename.csv. To link to a live file you...

That's it! As we said, the process won't win any awards for innovation, but as a platform-neutral way of syncing data it does the job. If you have any questions about setting up your live data link, just let us know via the contact page. We also provide an option to FTP the CSV file if that works better for you; contact us for details.